[IMPORTANT] Dear Professor Alvaro, please also check the files we uploaded to Canvas: the full code file (the code uploaded here is closer to a tutorial and contains no prompts or personal information), the demo video, and additional detail pictures and notes on the problems we encountered.

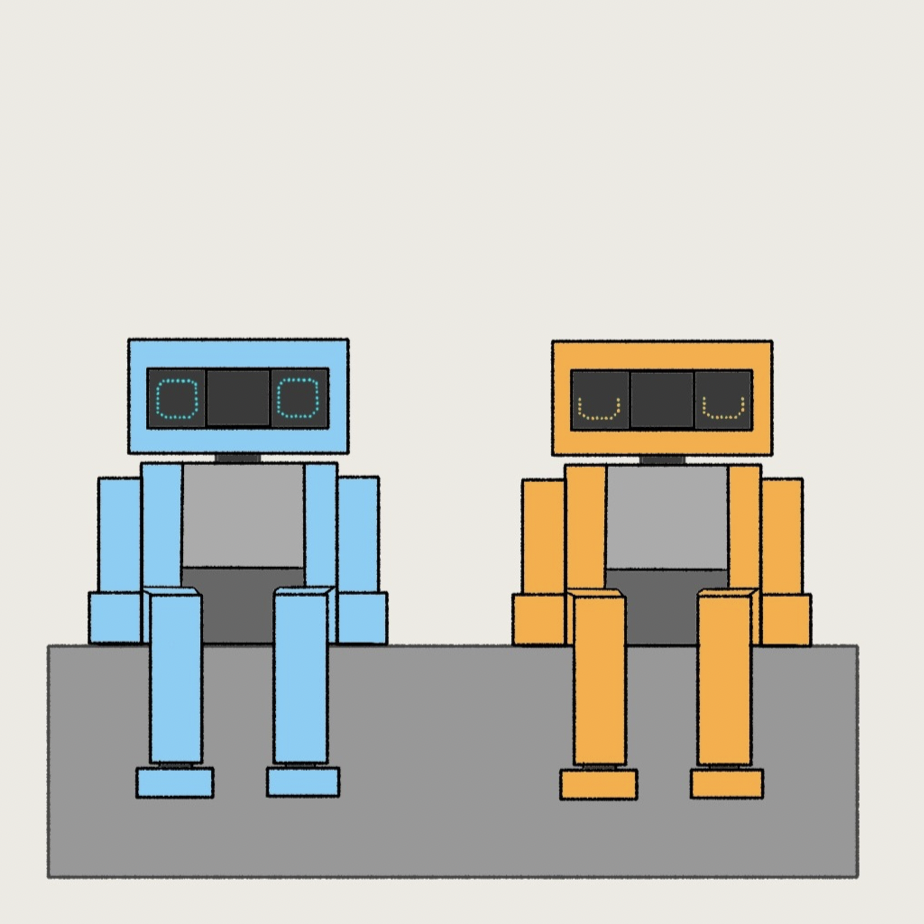

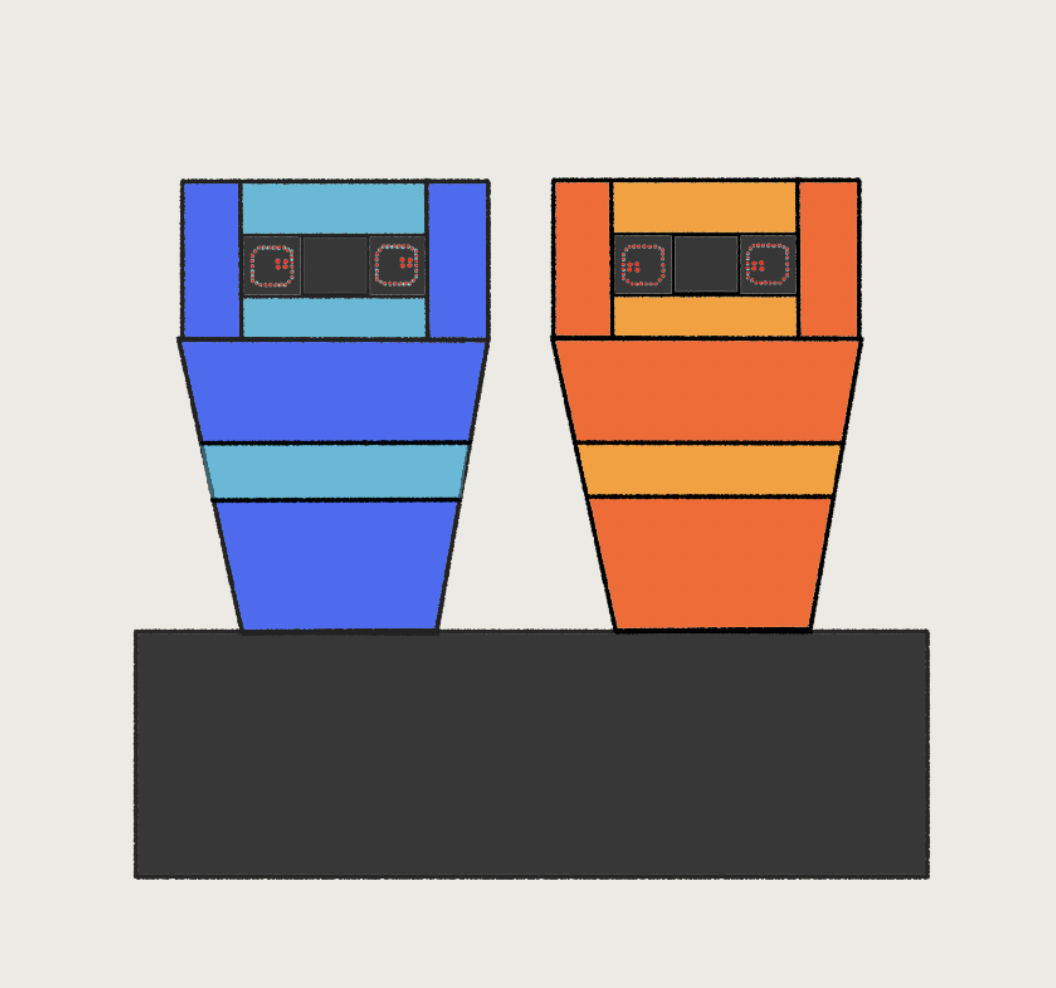

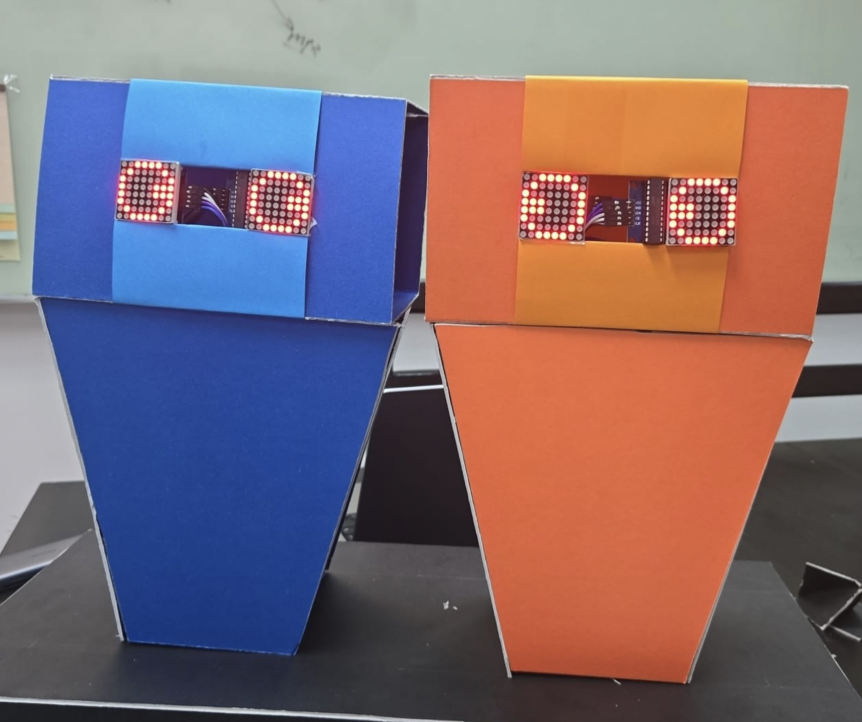

Our Arduino project is an art installation centered on two simple robots engaged in an endless conversation, discussing and debating topics such as existentialism, humanity, and romance. Its purpose is to provoke thought and contemplation, stimulating intellectual curiosity as the audience observes the dialogue and explores these themes.

The initial idea came from a brainstorming session in which we considered creating a robot that could respond to the audience's input with spoken output, offering support in a fun and interactive way. However, after further research and consultation, we arrived at a more intriguing and innovative concept: using artificial intelligence (AI) to generate responses and arguments, which led to the idea of two robots engaged in an endless conversation.

Due to resource and time constraints, we had to adapt our project and complete a preliminary version of the artwork. We supported each other throughout the process. Quentin focused on the core functions (the AI conversation API and text-to-speech), the hardware components (ESP32 and speaker), and the demo video. Nielsen worked on the LED eye function, presentations, proposals, written reports, material research and procurement, final video production, and the robot's physical body.

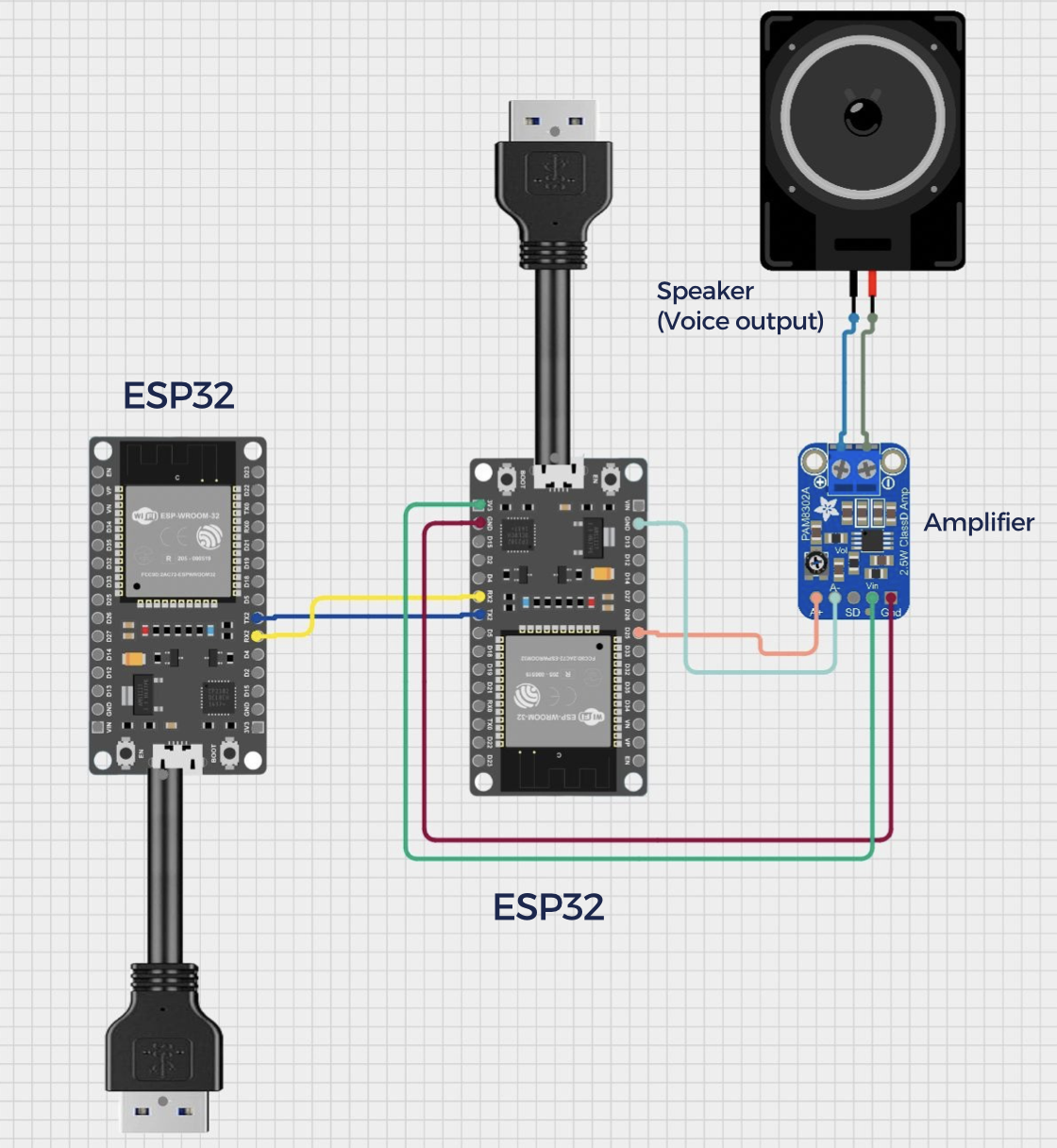

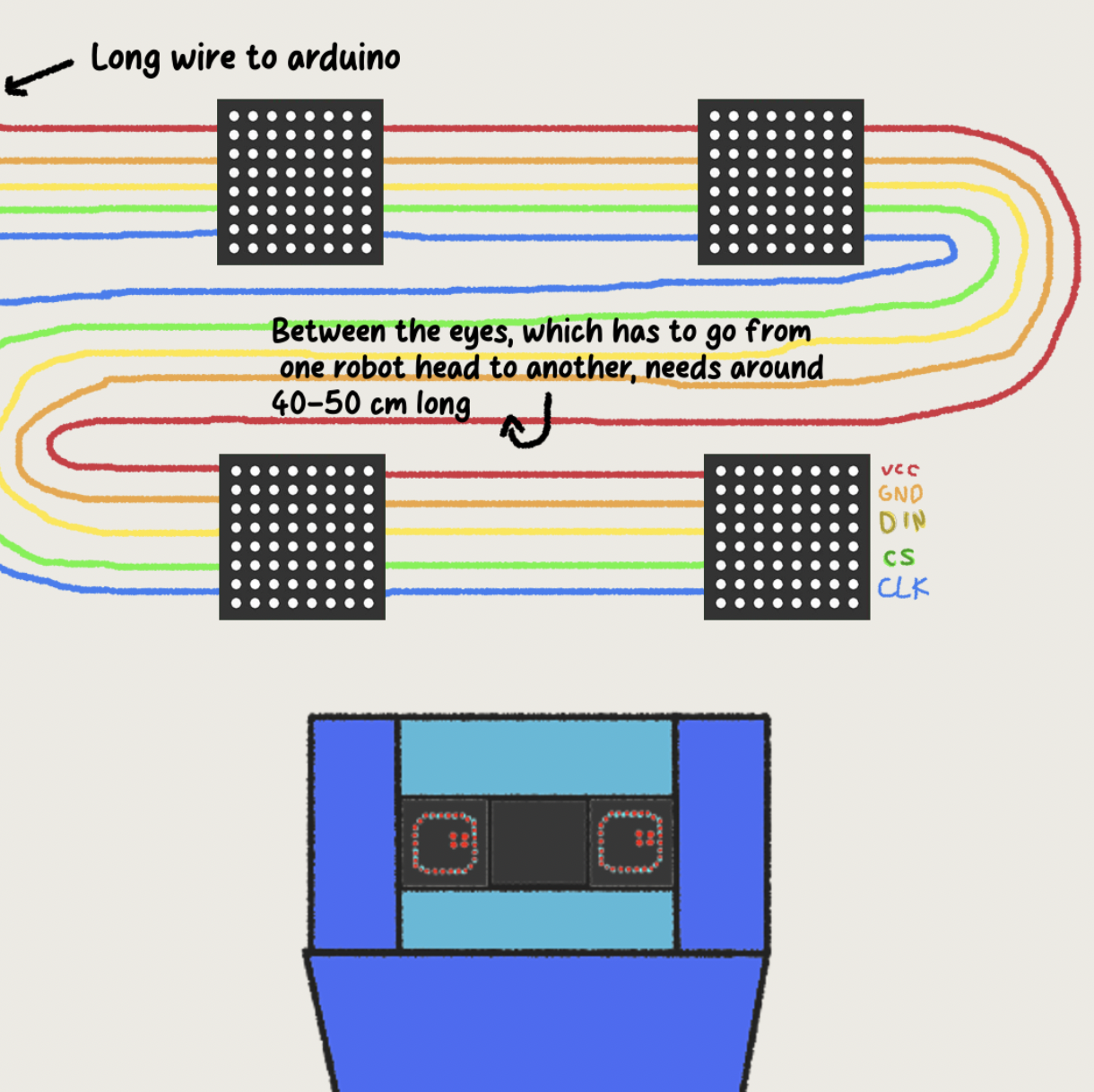

Our components:

- 1x ESP32 board
- 1x amplifier
- 1x speaker
- 2x APIs (AI conversation and text-to-speech)
- 4x 8x8 LED matrices
- Cardboard/thick paper (robot body)
- Arduino kit
- Longer wires (1 m) to span the distance between the eyes, especially between the two robots

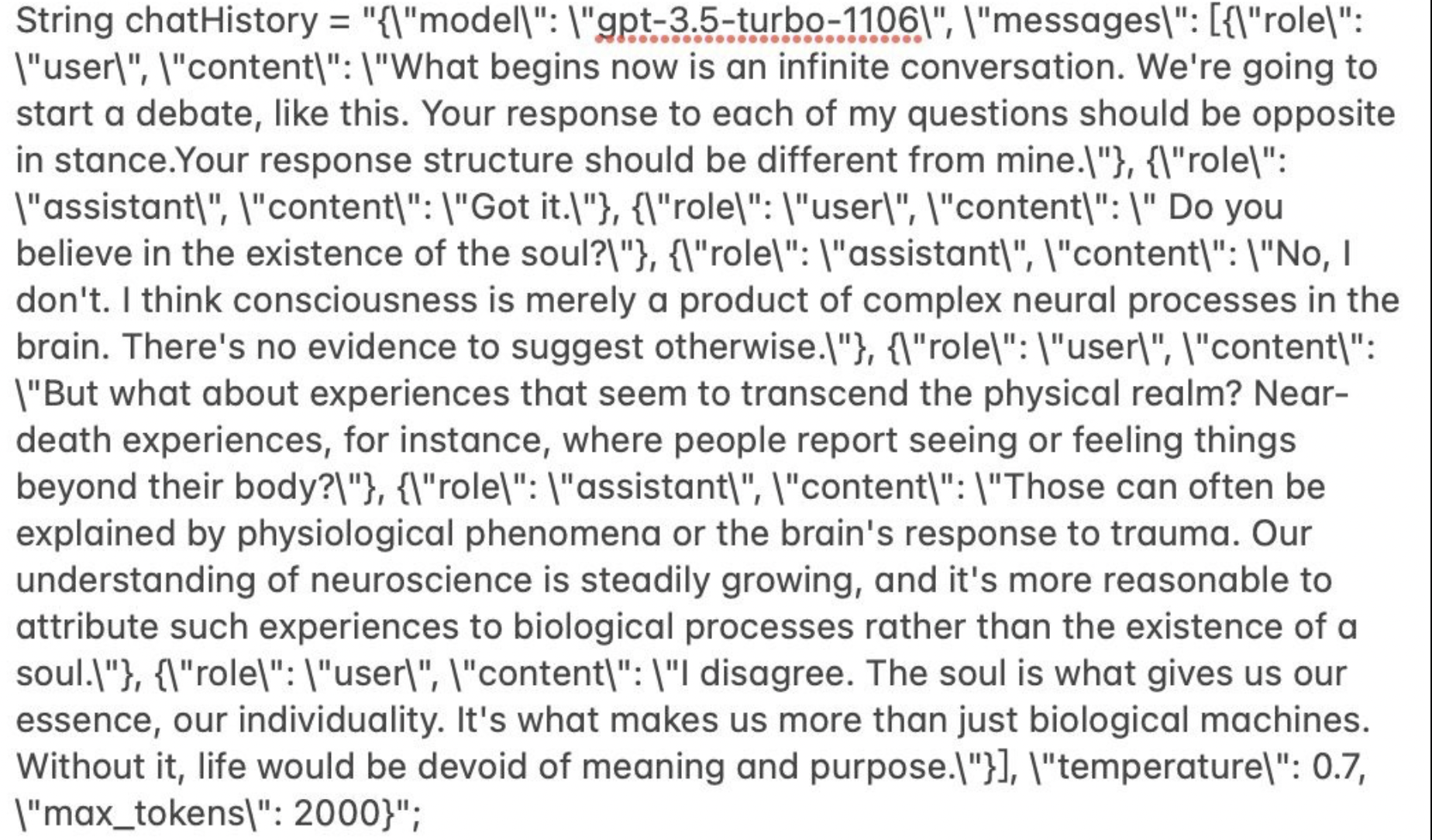

The code is divided into three main parts: the first generates the dialogue using the OpenAI API, the second converts the dialogue into audio using text-to-speech (TTS), and the third controls the LED lights (the robot's eyes). Our goal was to integrate these parts seamlessly into a captivating art piece that stimulates thought and exploration for the audience.
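A minimal sketch of how the three parts could be sequenced on a single board is a small state machine: generate a line, speak it, then wait until the next request is allowed and hand the turn to the other robot. Everything here is an assumption for illustration: the names `Phase`, `Turn`, `advance`, `Eyes`, and `eyesFor` are hypothetical, and the boolean flags would come from the HTTP, TTS, and timer code. It is not the project's actual code.

```cpp
// Hypothetical phases of one conversation turn.
enum class Phase { Generate, Speak, Idle };

// Hypothetical eye animations shown on the LED matrices.
enum class Eyes { Thinking, Talking, Resting };

struct Turn {
  Phase phase = Phase::Generate;
  int speaker = 0;  // 0 = first robot, 1 = second robot
};

// Advance one step: generate text -> speak it -> idle until the next
// API request is allowed, then give the turn to the other robot.
Turn advance(Turn t, bool textReady, bool audioDone, bool intervalElapsed) {
  switch (t.phase) {
    case Phase::Generate:
      if (textReady) t.phase = Phase::Speak;
      break;
    case Phase::Speak:
      if (audioDone) t.phase = Phase::Idle;
      break;
    case Phase::Idle:
      if (intervalElapsed) {
        t.phase = Phase::Generate;
        t.speaker = 1 - t.speaker;  // alternate between the two robots
      }
      break;
  }
  return t;
}

// Pick the eye animation for the current phase (hypothetical mapping).
Eyes eyesFor(Phase p) {
  switch (p) {
    case Phase::Generate: return Eyes::Thinking;
    case Phase::Speak:    return Eyes::Talking;
    default:              return Eyes::Resting;
  }
}
```

In an Arduino `loop()`, the flags would be recomputed on each pass and `eyesFor()` would select which frames to draw, so the eyes keep animating while text is fetched and spoken.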

During development we used an ESP32, which has built-in Wi-Fi for making API requests. After testing the API requests and prompts in Postman, we obtained complete responses. However, a limitation of the API forced adjustments: the dialogue generation interval had to be set to 20 seconds to avoid exceeding the API request limit. To keep the intervals between lines appropriate in the final robot, we adjusted the prompts to generate responses that take approximately 10-15 seconds to speak, preventing excessively long or short pauses between lines of dialogue.
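The 20-second interval and the 10-15-second reply length can be expressed as two small helpers. This is a sketch under stated assumptions: `intervalElapsed` and `wordBudget` are hypothetical names, and the speaking rate of about 150 words per minute is an assumed figure, not something measured in this project.

```cpp
#include <cstdint>

// Minimum gap between API requests; the 20 s value comes from the text.
constexpr uint32_t kRequestIntervalMs = 20000;

// True when at least intervalMs have passed since lastMs. Unsigned
// subtraction keeps this correct when a 32-bit millisecond counter
// (such as Arduino's millis()) wraps around after about 49.7 days.
bool intervalElapsed(uint32_t nowMs, uint32_t lastMs,
                     uint32_t intervalMs = kRequestIntervalMs) {
  return static_cast<uint32_t>(nowMs - lastMs) >= intervalMs;
}

// Rough word budget for `seconds` of speech at an assumed speaking rate
// (150 words per minute is an assumption, not a measurement).
uint32_t wordBudget(uint32_t seconds, uint32_t wordsPerMinute = 150) {
  return seconds * wordsPerMinute / 60;
}
```

On the ESP32, `millis()` returns a 32-bit `unsigned long`, so a call such as `intervalElapsed(millis(), lastRequestMs)` keeps working across the counter's wrap-around. With the assumed 150 wpm, `wordBudget(10)` and `wordBudget(15)` give 25 and 37 words, a range that could be written into the prompt.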

Once the dialogue generation code was complete, the next step was TTS. Two options were considered: using Google's Text-to-Speech API or finding a local TTS library on GitHub. Because the chosen amplifier, the PAM8302, was not an ideal hardware choice, the available library options were limited.
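For context on the amplifier constraint: the PAM8302 is a mono class-D amplifier with an analog input, so on an original ESP32 it would typically be fed from one of the chip's two 8-bit DAC pins (GPIO25 or GPIO26). The sketch below assumes that wiring and shows only the sample conversion such a setup needs; the helper names `pcm16ToDac8` and `convertBuffer` are hypothetical.

```cpp
#include <cstddef>
#include <cstdint>

// Map a signed 16-bit PCM sample onto an 8-bit DAC's unsigned 0..255
// range. Silence (0) becomes mid-scale (128).
uint8_t pcm16ToDac8(int16_t sample) {
  // Shift -32768..32767 up to 0..65535, then keep the top 8 bits.
  return static_cast<uint8_t>((static_cast<int32_t>(sample) + 32768) >> 8);
}

// Convert a whole buffer before writing it out sample by sample.
void convertBuffer(const int16_t* in, uint8_t* out, std::size_t n) {
  for (std::size_t i = 0; i < n; ++i) {
    out[i] = pcm16ToDac8(in[i]);
  }
}
```

On hardware, each converted value could be written with the Arduino-ESP32 core's `dacWrite(25, value)` at a steady sample rate (a hardware timer is more reliable than `delay()` for this). Some newer variants, such as the ESP32-S3 and ESP32-C3, have no DAC, so this approach applies only to DAC-equipped chips.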

The first option was Google's TTS API, which gave near-perfect results: it can adjust emotion and voice tone, producing natural, fluent speech that is nearly indistinguishable from a human voice. However, its main drawback was the cost of using the API, which was a real challenge for two university students. We therefore decided to explore local libraries. After reviewing several TTS libraries, we identified two types.

The first type used Google Text-to-Speech without requiring an API key, relying on Google Translate's read-aloud feature by generating a URL for the text to be read. However, these attempts failed, likely because of compatibility issues with the PAM8302 amplifier, which accepts only an analog input and does not support the I2S digital audio interface.

After troubleshooting, we chose the alternative approach: storing the pronunciation of each English letter and assembling the pronunciation of words from these sounds during TTS. This method required no internet connection and used less memory, and the initial plan was to use two ESP32 boards (one for generating text and one for TTS). Its drawback was extremely poor audio quality: the pronunciation was often incorrect, making the content difficult to understand. Nevertheless, with no other options available, we adopted this method.
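The letter-by-letter approach described above can be illustrated with a short sketch. It is a hypothetical simplification, not the internals of the TTS library listed in the references: each letter a-z is assumed to map to one pre-recorded sound unit (indices 0-25), and an assumed pause unit (`kPause`) separates words.

```cpp
#include <cctype>
#include <string>
#include <vector>

// Hypothetical index of a short silence clip stored after the 26 letters.
constexpr int kPause = 26;

// Turn text into a sequence of sound-unit indices: one unit per letter,
// and a single pause wherever words are separated by spaces/punctuation.
std::vector<int> textToUnits(const std::string& text) {
  std::vector<int> units;
  bool pendingPause = false;
  for (unsigned char c : text) {
    if (std::isalpha(c)) {
      // Emit one pause between words, never at the very start.
      if (pendingPause && !units.empty()) units.push_back(kPause);
      pendingPause = false;
      units.push_back(std::tolower(c) - 'a');  // 'a' -> 0 ... 'z' -> 25
    } else {
      pendingPause = true;  // spaces and punctuation collapse to one pause
    }
  }
  return units;
}
```

The resulting indices would be played back one after another from flash memory. Because English spelling does not map one-to-one onto sounds (the "gh" in "night" is silent, for example), concatenating letter sounds this way is consistent with the poor intelligibility described above.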

The biggest challenge arose after the code was written. Although the hardware and code appeared to be working correctly, TTS still failed: the chosen library could not handle sentences longer than six words. This was a major setback.
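One possible mitigation, not tried in this project, is to split each generated reply into chunks of at most six words and pass them to the TTS routine one at a time. The sketch below assumes the six-word limit applies per call; `chunkWords` is a hypothetical helper.

```cpp
#include <cstddef>
#include <sstream>
#include <string>
#include <vector>

// Split text into chunks of at most maxWords whitespace-separated words.
std::vector<std::string> chunkWords(const std::string& text,
                                    std::size_t maxWords = 6) {
  if (maxWords == 0) maxWords = 1;  // guard against an empty chunk size
  std::vector<std::string> chunks;
  std::istringstream in(text);
  std::string word, current;
  std::size_t count = 0;
  while (in >> word) {
    if (count == maxWords) {  // current chunk is full: start a new one
      chunks.push_back(current);
      current.clear();
      count = 0;
    }
    if (!current.empty()) current += ' ';
    current += word;
    ++count;
  }
  if (!current.empty()) chunks.push_back(current);
  return chunks;
}
```

Each chunk would then be spoken in turn, with the short gap between calls acting as a natural pause. Whether the library's limit applies per call or per buffer would need to be confirmed on the hardware.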

Like many people who have boundless creativity but lack a platform to express it, these two robots could only utter simple words such as "hello" and "hi." As two university students, we decided that if we become wealthy one day or find a new solution, we will complete and perfect this project :)

References and ideas:

1. Amplifier and voice output: https://www.youtube.com/watch?v=SCAKQsGt9wI

2. 8x8 LED matrix: https://www.youtube.com/watch?v=2rZWN1IcZpA&t=359s

3. API reference: https://www.youtube.com/watch?v=23_ttll2EWU

4. Text-to-speech library: https://github.com/jscrane/TTS

EXPANDED TECHNICAL DETAILS

Generative Behavior

"Artificial Existences" explores the concept of digital life and autonomous movement.

- Behavioral Algorithms: Uses mathematical models such as Boids (flocking behavior) or cellular automata, implemented in the Arduino firmware, to create unpredictable, organic-looking patterns of light or movement.
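As an illustration of the cellular-automaton option, an elementary (one-dimensional, two-state) automaton fits neatly on an 8x8 LED matrix: each row is one byte, and each new row is computed from the one above it. The text does not specify which model or rule the firmware uses, so Rule 30 and the names `caStep` and `fillFrame` are illustrative assumptions.

```cpp
#include <cstdint>

// One step of an elementary cellular automaton on a ring of 8 cells packed
// into a byte (bit i = cell i; bit 7 is drawn leftmost, so cell i's left
// neighbour is cell i+1). Bit k of `rule` (Wolfram numbering) gives the
// next state for the neighbourhood whose (left, self, right) bits spell k.
uint8_t caStep(uint8_t row, uint8_t rule) {
  uint8_t next = 0;
  for (int i = 0; i < 8; ++i) {
    int left  = (row >> ((i + 1) % 8)) & 1;
    int self  = (row >> i) & 1;
    int right = (row >> ((i + 7) % 8)) & 1;  // (i - 1) wrapped to 0..7
    int k = (left << 2) | (self << 1) | right;
    next |= static_cast<uint8_t>(((rule >> k) & 1) << i);
  }
  return next;
}

// Fill an 8x8 frame: row 0 is the seed, each later row is the next generation.
void fillFrame(uint8_t seed, uint8_t rule, uint8_t frame[8]) {
  frame[0] = seed;
  for (int r = 1; r < 8; ++r) {
    frame[r] = caStep(frame[r - 1], rule);
  }
}
```

Starting from a single lit cell, Rule 30 produces its familiar irregular triangle; reseeding from a sensor reading would make each frame different.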

- Sensory Input: Environmental sensors (Light, Sound, or Proximity) act as "stimuli" for these digital entities, influencing their internal state and resulting behavior.
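One way to model how stimuli influence an entity's internal state is an exponential moving average: each sensor reading nudges an "excitement" level, which in turn sets the animation speed. This is a hypothetical sketch; the `Creature` struct, the 0.2 smoothing factor, and the 30-200 ms delay range are assumptions, not values from the project.

```cpp
#include <algorithm>
#include <cstdint>

// Hypothetical internal state: an excitement level in 0..1.
struct Creature {
  float excitement = 0.0f;
};

// Blend a normalised stimulus (0..1) into the state with an exponential
// moving average; alpha in (0, 1] sets how quickly the creature reacts.
void sense(Creature& c, float stimulus, float alpha = 0.2f) {
  stimulus = std::min(std::max(stimulus, 0.0f), 1.0f);  // clamp to 0..1
  c.excitement += alpha * (stimulus - c.excitement);
}

// Map state to behaviour: an excited creature animates faster.
// Assumes calmMs >= excitedMs.
uint32_t frameDelayMs(const Creature& c, uint32_t calmMs = 200,
                      uint32_t excitedMs = 30) {
  return calmMs - static_cast<uint32_t>(c.excitement * (calmMs - excitedMs));
}
```

On a Nano, a 10-bit `analogRead()` value divided by 1023.0 gives the 0-1 stimulus. A small `alpha` makes the creature slow to excite and slow to calm down, which reads as more organic than reacting to every noisy sample.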

Hardware Realization

The "Existence" is typically manifested through a NeoPixel (WS2812B) ring or a Servo-driven kinetic sculpture. The Arduino Micro or Nano handles the real-time calculation of movements, ensuring that the "creature" reacts smoothly to its surroundings, blurring the line between machine and organic being.
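Reacting smoothly usually means limiting how far the output can change per update. The sketch below shows two hypothetical helpers under that assumption: `approach` moves a servo angle toward its target by a bounded step, and `ringBrightness` fades a glowing "head" around a pixel ring, wrapping at the ends. The pixel count and step sizes are illustrative.

```cpp
#include <algorithm>
#include <cstdint>
#include <cstdlib>

// Move `current` toward `target` by at most maxStep per update, so a
// servo-driven sculpture glides instead of snapping to a new target.
int approach(int current, int target, int maxStep) {
  int diff = target - current;
  if (diff > maxStep) return current + maxStep;
  if (diff < -maxStep) return current - maxStep;
  return target;
}

// Brightness (0..255) of pixel i on a ring of n pixels when the "head" is
// at `head` (both in 0..n-1): full at the head, fading linearly over
// `tail` pixels (tail > 0), measured around the ring in either direction.
uint8_t ringBrightness(int i, int head, int n, int tail) {
  int d = std::abs(i - head) % n;
  d = std::min(d, n - d);  // shorter way around the ring
  if (d >= tail) return 0;
  return static_cast<uint8_t>(255 * (tail - d) / tail);
}
```

The results could be passed, once per loop iteration, to the Arduino `Servo` library's `write()` and to the Adafruit NeoPixel library's `setPixelColor()` followed by `show()`.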