Elephants are the largest land mammals and, much like humans, are highly sensitive and caring animals. They are highly intelligent, with complex emotions, compassion and self-awareness (elephants are one of very few species to recognize themselves in a mirror!). They can hear low-frequency rumbles over long distances through vibrations that travel up through their feet and into their ears. Like humans, elephants mourn the death of their loved ones. An elephant never forgets.

But these wonderful creatures are in grave danger. Once common throughout Africa and Asia, elephant numbers fell dramatically in the 19th and 20th centuries, largely due to the ivory trade and habitat loss. While some populations are now stable, poaching, human-wildlife conflict and habitat destruction continue to threaten the species.

African elephant populations have fallen from an estimated 12 million a century ago to some 400,000. In recent years, at least 20,000 elephants have been killed in Africa each year for their tusks. African forest elephants have been the worst hit: their populations declined by 62% between 2002 and 2011 and they have lost 30% of their geographical range, while African savanna elephants declined by 30% between 2007 and 2014. This dramatic decline has continued and even accelerated, with cumulative losses of up to 90% in some landscapes between 2011 and 2015. Today, the greatest threat to African elephants is wildlife crime, primarily poaching for the illegal ivory trade, while the greatest threat to Asian elephants is habitat loss, which results in human-elephant conflict.

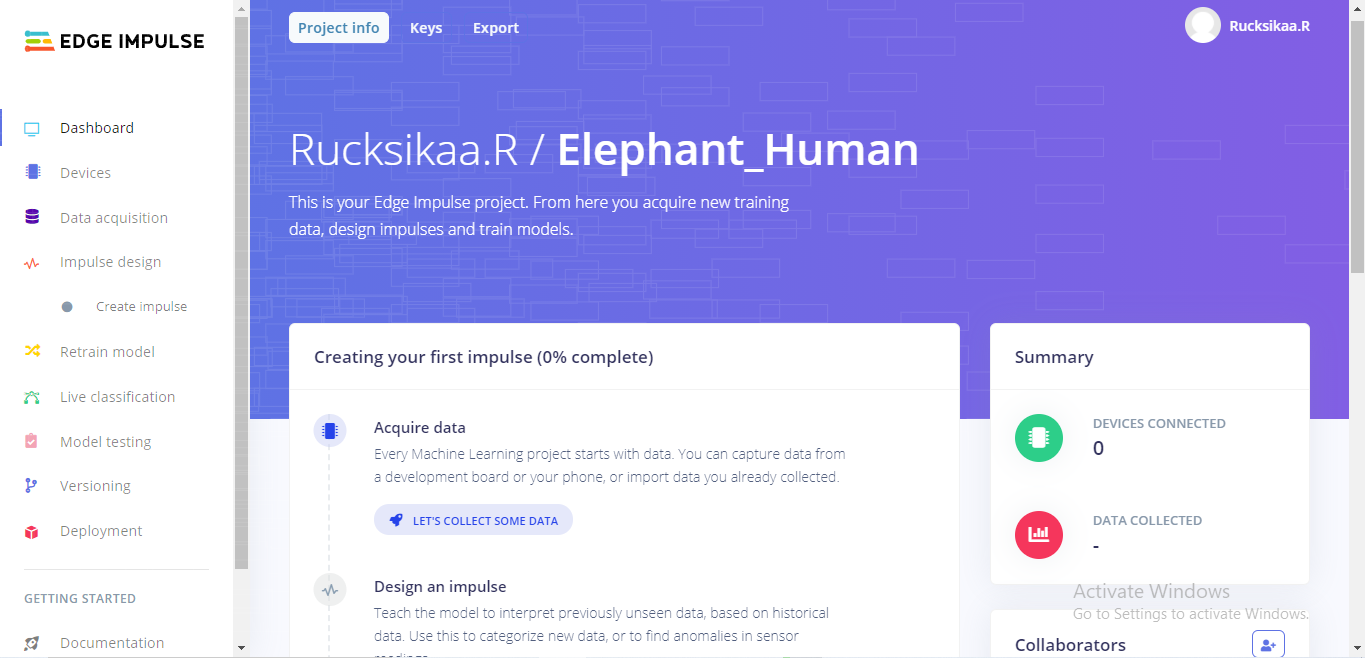

Edge Impulse

Edge Impulse enables developers to create the next generation of intelligent device solutions with embedded machine learning. In this project, we will create a machine learning model with the help of Edge Impulse. Using a small audio dataset, we will train the model to differentiate between humans and elephants.

Project Perspective

This project explores how embedded machine learning can support wildlife conservation. It focuses on two building blocks: mapping audio spectrogram features to class labels, and running the resulting classifier on the device with Edge Impulse so that each detection can trigger an alert in the field.

Technical Implementation: Audio Spectrograms and Neural Buffers

The project is built from the following layers, from sensing to conservation alerts:

- Identification layer: The onboard MP34DT05 microphone captures the surrounding sound that the system classifies.

- Conversion layer: The board receives the microphone's audio over a high-speed digital protocol (PDM, pulse-density modulation).

- Conservation interface layer: A beacon light and RFID tag signal each alert status (e.g. Elephant Nearby, Human/Threat!).

- Communication gateway layer: The Edge Impulse SDK supports manual data upload or an automated model-sync status check during initial calibration.

- Processing logic: The code follows a "neural-inference-dispatch" (or ml-dispatch) strategy: it classifies real-time audio and matches the resulting labels to alerts for continuous wildlife monitoring.

- Communication loop: Status codes are sent periodically to the Serial Monitor during initial calibration.
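The step from classification label to alert status can be sketched as follows. This is a minimal illustration, not the project's actual firmware: the label names, the `alert_status` helper and the 0.6 confidence threshold are assumptions, and only the two status strings come from the list above.

```python
# Hypothetical mapping from classifier labels to the alert statuses named above.
STATUS_BY_LABEL = {
    "elephant": "Elephant Nearby",
    "human": "Human/Threat!",
}


def alert_status(scores, threshold=0.6):
    """Return the alert status for a dict of label -> confidence score.

    Falls back to "Uncertain" when the best label is below the threshold
    (the threshold value is an assumption, not taken from the project).
    """
    label, confidence = max(scores.items(), key=lambda item: item[1])
    if confidence < threshold:
        return "Uncertain"
    return STATUS_BY_LABEL.get(label, "Uncertain")


if __name__ == "__main__":
    print(alert_status({"elephant": 0.9, "human": 0.1}))
```

Keeping an explicit "Uncertain" outcome avoids raising an alert on a weak prediction.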

Hardware-AI Infrastructure

- Arduino Nano 33 BLE Sense: The "brain" of the project. It samples audio, runs the ML model and drives the alerts.

- Edge Impulse: Used to train the model and to generate the classifier that runs on the board.

- High-Sensitivity Mic: The audio input for the classifier.

- LiPo Power: Powers the device during remote field operation.

- EZ-Light Beacon: Provides the visual alert signal.

- Micro-USB Cable: Used to program the Arduino and to connect it to your computer.

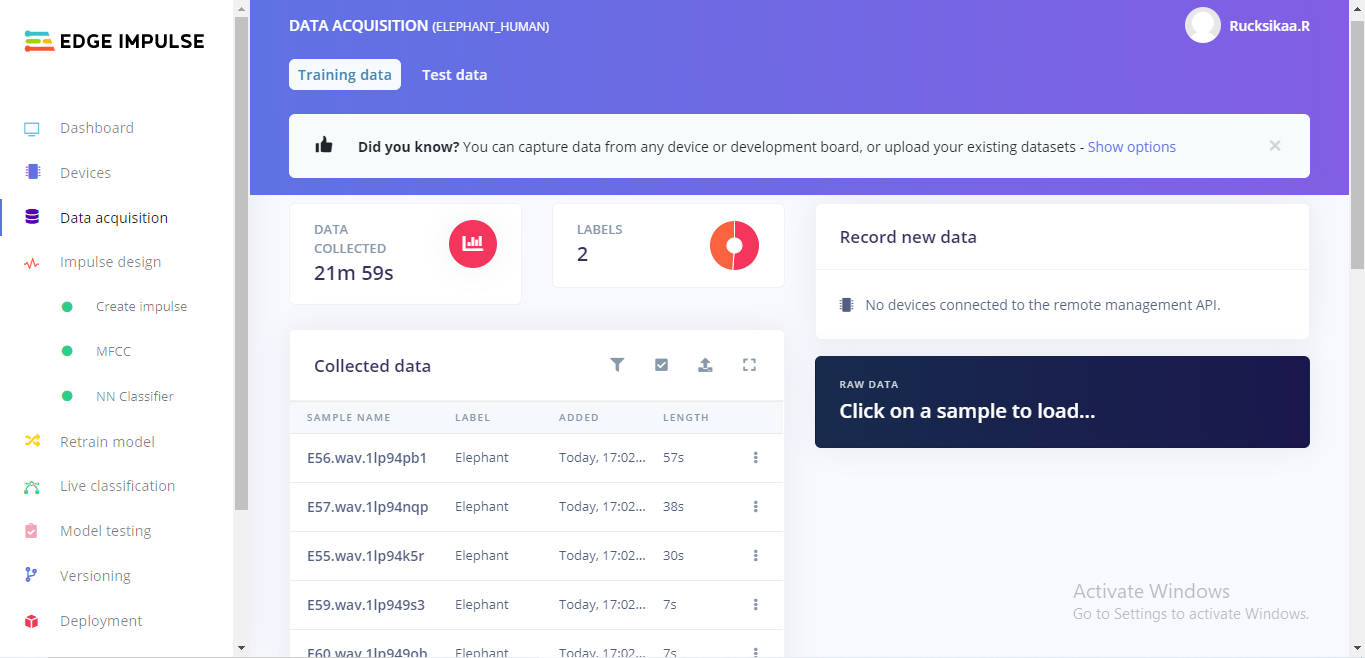

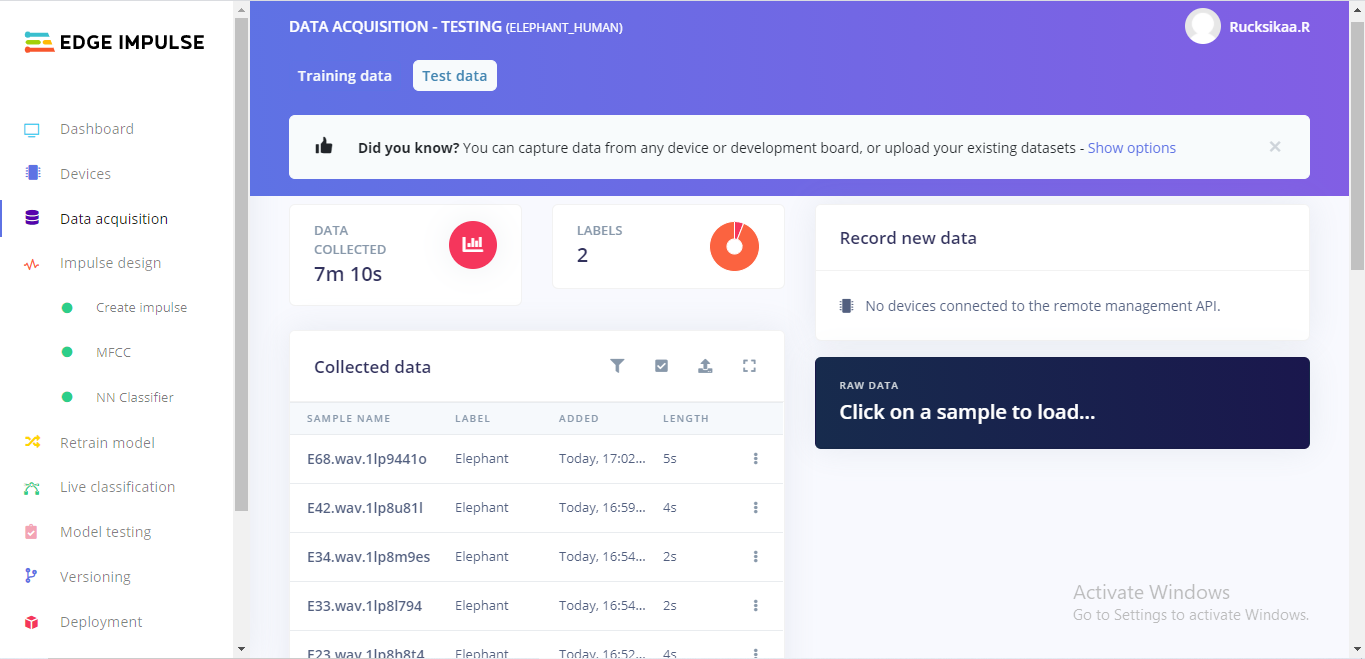

Step 01: Acquire Data

I did not use a device to capture data. Instead, I created the training and test datasets from sounds in the Elephant Voices database and on YouTube. I split the recordings into shorter samples to cut out noise and increase the accuracy of the model.

I have created the dataset under two labels: Elephant and Human.
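As an illustration of keeping training and test data separate, here is a minimal sketch that assigns each sample to a split based on a hash of its file name, so the same file always lands in the same set. The file names, the `assign_split` helper and the 20% test fraction are assumptions made for this example; it is not a description of how Edge Impulse splits data internally.

```python
import hashlib


def assign_split(filename, test_fraction=0.2):
    """Deterministically assign a sample to 'training' or 'testing'."""
    digest = hashlib.sha256(filename.encode("utf-8")).digest()
    # Map the first 4 bytes of the hash to a number in [0, 1).
    bucket = int.from_bytes(digest[:4], "big") / 2**32
    return "testing" if bucket < test_fraction else "training"


if __name__ == "__main__":
    # Hypothetical file names, one per split sample.
    for name in ["elephant.rumble01.wav", "elephant.rumble02.wav", "human.talk01.wav"]:
        print(name, "->", assign_split(name))
```

Because the assignment depends only on the file name, re-running the script never moves a sample from one set to the other.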

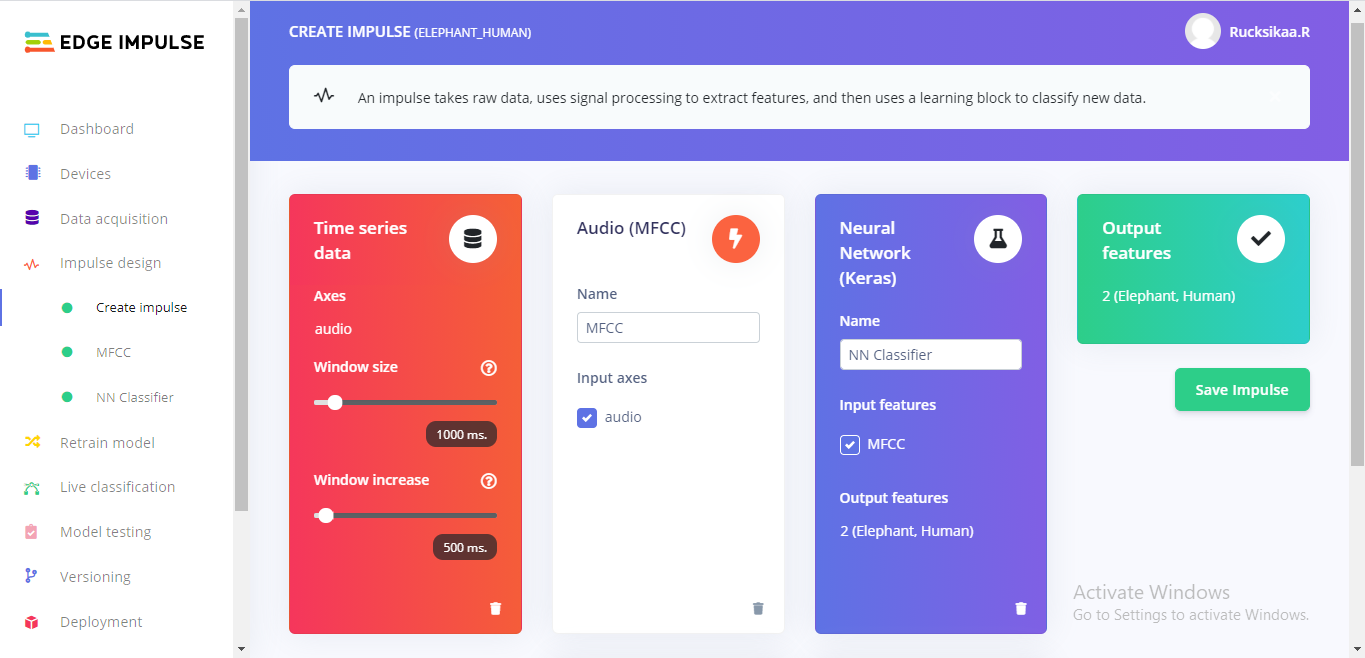

Step 02: Create Impulse

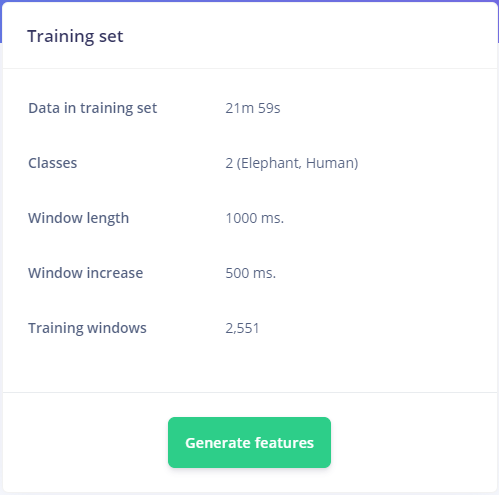

After creating my training dataset, I designed an impulse. An impulse takes the raw data, slices it up into smaller windows, uses signal processing blocks to extract features, and then uses a learning block to classify new data. Signal processing blocks always return the same values for the same input and are used to make raw data easier to process, while learning blocks learn from past experiences.
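The windowing step can be sketched in a few lines. This is a simplified illustration of the idea, not Edge Impulse's implementation; the `slice_windows` name is hypothetical, and `stride` plays the role of the step between window starts.

```python
def slice_windows(samples, window_size, stride):
    """Split a sequence of samples into fixed-size, possibly overlapping windows."""
    if window_size <= 0 or stride <= 0:
        raise ValueError("window_size and stride must be positive")
    windows = []
    start = 0
    # Stop as soon as a full window no longer fits in the remaining samples.
    while start + window_size <= len(samples):
        windows.append(samples[start:start + window_size])
        start += stride
    return windows


if __name__ == "__main__":
    raw = list(range(10))
    for window in slice_windows(raw, window_size=4, stride=2):
        print(window)
```

With 10 samples, a window of 4 and a stride of 2, this yields four windows that each overlap the previous one by two samples.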

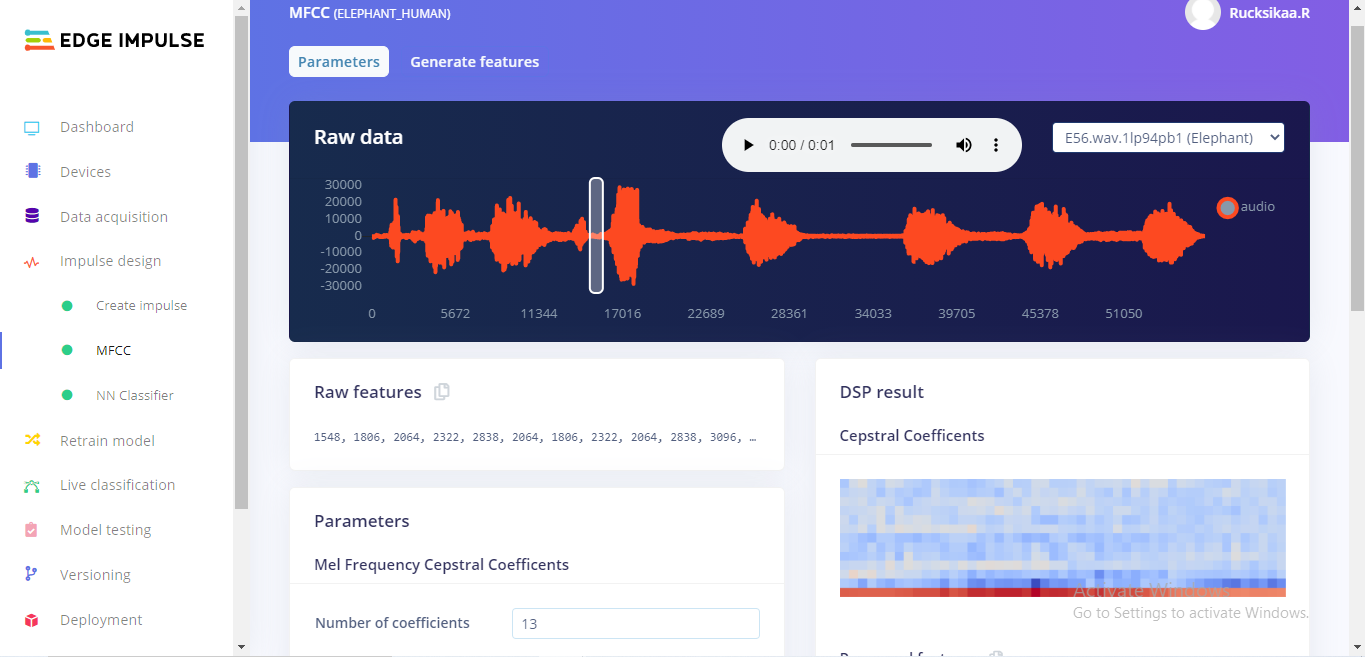

For this project, we will be using the "MFCC" signal processing block, which extracts features from audio signals using Mel Frequency Cepstral Coefficients.

We then pass this simplified audio data into a Neural Network block, which learns patterns from the data and can apply them to classify new data. This kind of block is well suited to categorizing movement or recognizing audio.
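To give a feel for the "Mel" part of MFCC, the sketch below converts between hertz and the mel scale and spaces filter centers evenly in mel. It uses the widely quoted formula mel = 2595 · log10(1 + f / 700); whether the MFCC block uses exactly this variant is an assumption, and the function names are illustrative.

```python
import math


def hz_to_mel(frequency_hz):
    """Convert a frequency in hertz to the mel scale."""
    return 2595.0 * math.log10(1.0 + frequency_hz / 700.0)


def mel_to_hz(mel):
    """Convert a mel value back to hertz (inverse of hz_to_mel)."""
    return 700.0 * (10.0 ** (mel / 2595.0) - 1.0)


def mel_center_frequencies(low_hz, high_hz, num_filters):
    """Center frequencies (Hz) of filters spaced evenly on the mel scale."""
    low_mel, high_mel = hz_to_mel(low_hz), hz_to_mel(high_hz)
    step = (high_mel - low_mel) / (num_filters + 1)
    return [mel_to_hz(low_mel + step * (i + 1)) for i in range(num_filters)]


if __name__ == "__main__":
    for center in mel_center_frequencies(0.0, 8000.0, 5):
        print(round(center, 1))
```

The centers are packed closely at low frequencies and spread out at high ones, which gives low-pitched sounds more resolution.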

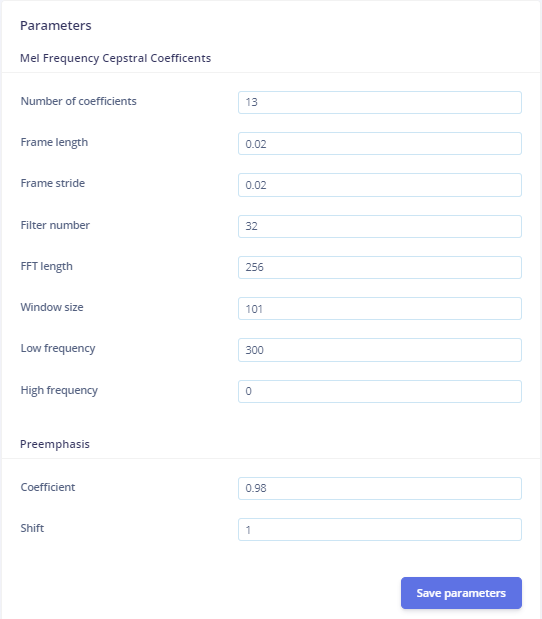

Step 03: MFCC configuration

Do not change the default parameters during the configuration.

Scroll down and click 'Save parameters'. This will redirect you to the 'Generate Features' page.

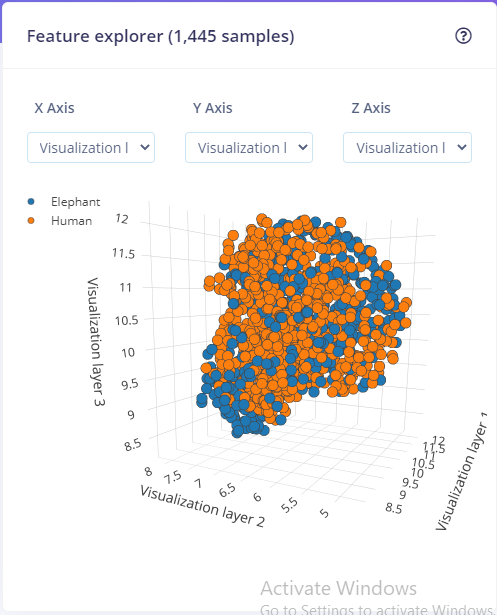

The feature explorer presents you with a visualisation of the generated MFCC features.

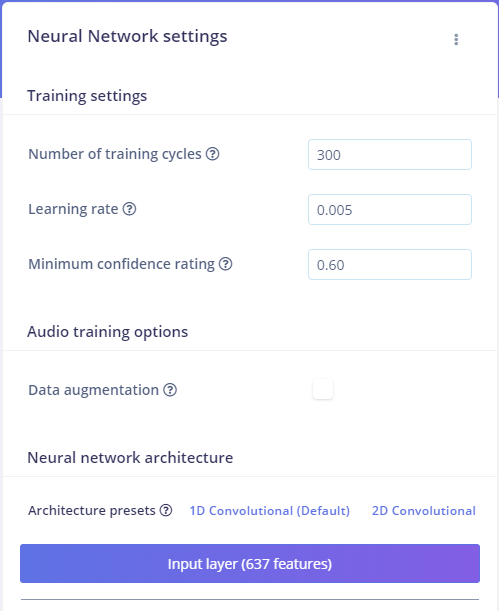

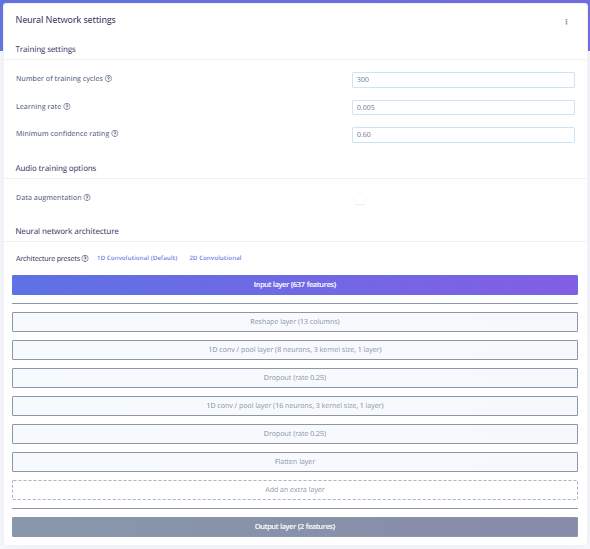

Step 04: Neural Network configuration

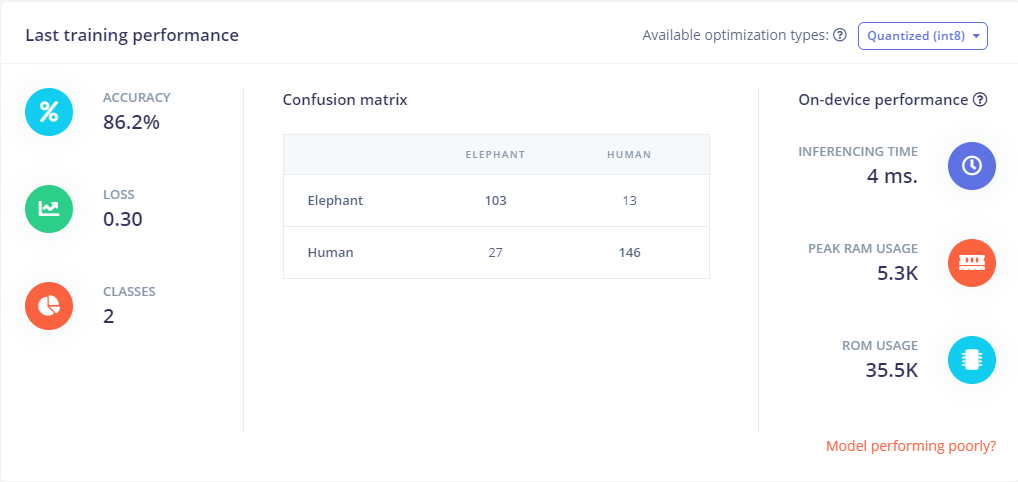

Now, it's time to start training a neural network. Neural networks are algorithms, modeled loosely after the human brain, that can learn to recognize patterns that appear in their training data. The network that we're training here will take the MFCC features as an input, and try to map them to one of two classes: elephant and human.
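The last step of such a network, turning raw output scores into a class and a confidence, can be sketched like this. The scores are made-up numbers and the label order is an assumption based on the two dataset labels; a trained model would produce the scores from the MFCC features.

```python
import math


def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(logits)  # subtract the maximum for numerical stability
    exps = [math.exp(value - peak) for value in logits]
    total = sum(exps)
    return [e / total for e in exps]


def classify(logits, labels=("elephant", "human")):
    """Return the most likely label and its probability."""
    probabilities = softmax(logits)
    best = max(range(len(labels)), key=lambda i: probabilities[i])
    return labels[best], probabilities[best]


if __name__ == "__main__":
    label, confidence = classify([2.0, 0.0])  # hypothetical network outputs
    print(label, round(confidence, 3))
```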

I had to train my model a few times with different combinations of the number of training cycles and the neural network architecture presets.

The accuracy of this machine learning model can be improved by acquiring more data; we need at least 10 minutes of data for each label.
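A quick way to check the 10-minute target is to add up the sample durations per label. The sketch below assumes each sample is available as a `(label, duration_in_seconds)` pair; the durations shown are invented for illustration.

```python
MIN_SECONDS_PER_LABEL = 10 * 60  # the 10-minute target mentioned above


def seconds_per_label(samples):
    """Sum the duration in seconds of all samples for each label."""
    totals = {}
    for label, duration_s in samples:
        totals[label] = totals.get(label, 0.0) + duration_s
    return totals


def labels_needing_data(samples, minimum=MIN_SECONDS_PER_LABEL):
    """Return label -> missing seconds for every label below the minimum."""
    totals = seconds_per_label(samples)
    return {
        label: minimum - total
        for label, total in totals.items()
        if total < minimum
    }


if __name__ == "__main__":
    # Hypothetical durations, in seconds.
    dataset = [("elephant", 400.0), ("elephant", 250.0), ("human", 300.0)]
    print(labels_needing_data(dataset))
```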

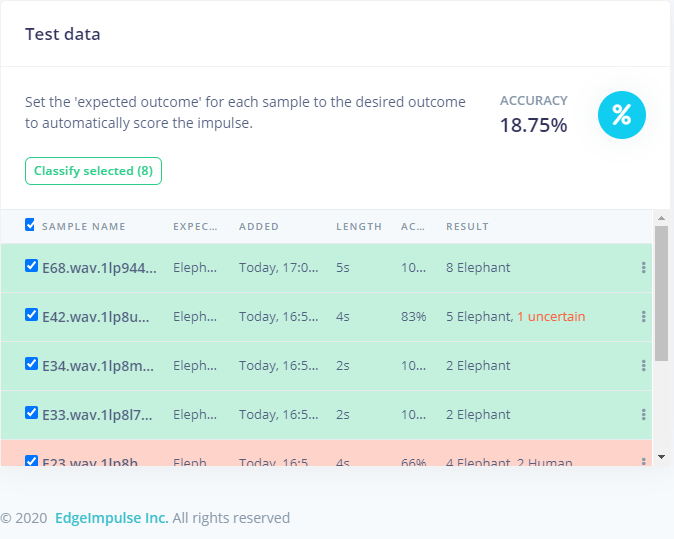

Step 05: Model testing

You can check how well your model performs on unseen data on the Model testing page. I tested 8 samples and my model recognised 5 of them (62.5%). If I had more data under each label, this ML model would have been more accurate.

Step 06: Deployment

The ML model is now ready for deployment. Deploying it to the device lets the model run without an internet connection, minimizes latency, and keeps power consumption low. You can either create a library or build firmware for your development board.

I have turned my audio classification model into optimized source code that can run on a device such as the Arduino Nano 33 BLE Sense.

The device can be attached to an elephant's collar and used to help protect the diminishing elephant population from danger and threats.

Conservation Hub Automation and Interaction Step-by-Step

The proximity-driven detection process is designed to be very user-friendly:

- Initialize Workspace: Seat your Nano 33 BLE Sense and battery inside a weatherproof enclosure and mount it securely on the tree or at the chosen location.

- Setup High-Speed Sync: In the Edge Impulse Studio, initialize the `Classifier-Model` and define the audio windows in `setup()`.

- Internal Dialogue Loop: The station constantly performs high-performance periodic signal checks and updates the detection status in real-time based on your location and settings.

- Visual and Data Feedback Integration: Watch your beacon signal the current detection status, pulsing according to your location settings.
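The periodic check-and-update loop described in the steps above could look roughly like the sketch below. It is an assumption-laden illustration: the `DetectionStatus` class and the rule that three consecutive checks must agree before the status changes are not from the original project, and are only one way to keep a single noisy prediction from flipping the beacon.

```python
from collections import deque


class DetectionStatus:
    """Track the station status across periodic classification checks."""

    def __init__(self, required_agreement=3):
        self.required_agreement = required_agreement
        # Only the most recent results are kept.
        self.recent = deque(maxlen=required_agreement)
        self.status = "idle"

    def update(self, label):
        """Record one check result and return the (possibly updated) status."""
        self.recent.append(label)
        window_full = len(self.recent) == self.required_agreement
        if window_full and len(set(self.recent)) == 1:
            self.status = label
        return self.status


if __name__ == "__main__":
    station = DetectionStatus()
    for result in ["elephant", "elephant", "elephant", "human"]:
        print(result, "->", station.update(result))
```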

Final and complete idea

To make things more interesting and effective, an RFID microchip could be fitted to the elephant's collar, or a passive RFID tag could be attached to the elephant's ear. Each elephant will have a unique ID, and with the help of ultra-high-frequency (UHF) antennas and SparkFun's simultaneous RFID readers, we would be able to detect whether an elephant is still a safe distance away from poaching risk areas or places where people reside. Simultaneous RFID readers can read multiple tags at once. If an elephant is approaching, the reader will be able to detect it, because it can estimate the distance between a given RFID tag and itself. If the elephant is at risk, the park or forest rangers can take the appropriate action.

The RFID reader, connected to the microcontroller, can be placed around 1 to 2 km away from areas where people live or where poaching activity is high. If the system detects an approaching elephant, the microcontroller is programmed to automatically turn on a beacon light and alert the people residing in that area.

This would also be helpful if the machine learning model fails to recognize sounds in the audio recorded by the microphone in the collar, or if the collar's battery runs out of power or the collar malfunctions.
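The combined decision can be sketched as below, purely as an illustration. Estimating distance from received signal strength with a log-distance path-loss model is an assumption of this sketch, as are the reference values (-40 dBm at 1 m, exponent 2.0), the tag IDs and the function names; a real reader and site would need calibration.

```python
def estimate_distance_m(rssi_dbm, rssi_at_1m_dbm=-40.0, path_loss_exponent=2.0):
    """Estimate tag distance from signal strength (log-distance path-loss model)."""
    return 10.0 ** ((rssi_at_1m_dbm - rssi_dbm) / (10.0 * path_loss_exponent))


def beacon_should_light(tag_rssi_by_id, alert_distance_m, audio_label=None):
    """Return True when any tagged elephant is close or the audio model hears one.

    audio_label is None when the collar classifier is unavailable
    (for example, a flat battery), so the RFID reading alone decides.
    """
    tag_is_close = any(
        estimate_distance_m(rssi) <= alert_distance_m
        for rssi in tag_rssi_by_id.values()
    )
    return tag_is_close or audio_label == "elephant"


if __name__ == "__main__":
    readings = {"E-001": -60.0, "E-002": -80.0}  # hypothetical tag IDs and RSSI values
    print(beacon_should_light(readings, alert_distance_m=15.0))
```

Treating the two signals as alternatives means the beacon still works when either the audio model or the RFID link is unavailable.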

Future Expansion

- OLED Identity Dashboard Integration: Add a small OLED display on the enclosure to show "Confidence (%)" or "Battery (%)."

- Multi-sensor Synchronization: Connect a "Bluetooth Tracker" to perform higher-precision "Local Paging" wirelessly via the cloud.

- Cloud Interface Support: Add a web dashboard, reachable from your smartphone over WiFi/BT, to track and log the full history.

- Advanced Velocity Profile Customization Support: Add specialized "Machine Learning (vCore)" to the code to allow triggers to be changed automatically based on the user's height!

Wildlife ML Differentiator is a perfect project for any science enthusiast looking for a more interactive and engaging AI tool!

[!IMPORTANT] The ML Model requires an accurate Audio feature mapping (e.g. for MFE vs MFE-v2) in the setup to ensure reliable wildlife classification; always ensure you have an appropriate Fail-Safe flag in the loop if the serial bus overloads!

Reference

- Arapahoe Libraries: https://arapahoelibraries.org/blogs/post/15-reasons-why-elephants-are-the-best/

- World Wildlife Fund (WWF): https://wwf.panda.org/knowledge_hub/endangered_species/elephants/

- World Wildlife Fund (WWF) - Elephant: https://www.worldwildlife.org/species/elephant

- Edge Impulse - Getting started: https://docs.edgeimpulse.com/docs

- Edge Impulse - Recognise sounds from audio: https://docs.edgeimpulse.com/docs/audio-classification