The Reconfigurable Wall is a student project submitted as the final project for the Dynamic Environments studio. The studio focuses on the development of reconfigurable structures that enhance occupants' social and physical experiences of the built environment. Students were asked to identify problems related to the built environment and propose solutions that take advantage of reconfigurable structures.

Students: "The initial concept involves altering the human experience through abrupt changes in the built environment. Using a simple cubic space as an example, the user, represented as a yellow circle, interacts with the space based on the automated reactions of the space itself. Various phase changes are revealed to the user depending on that user's actions, thus making the space a labyrinth with outcomes based on algorithmic movement."

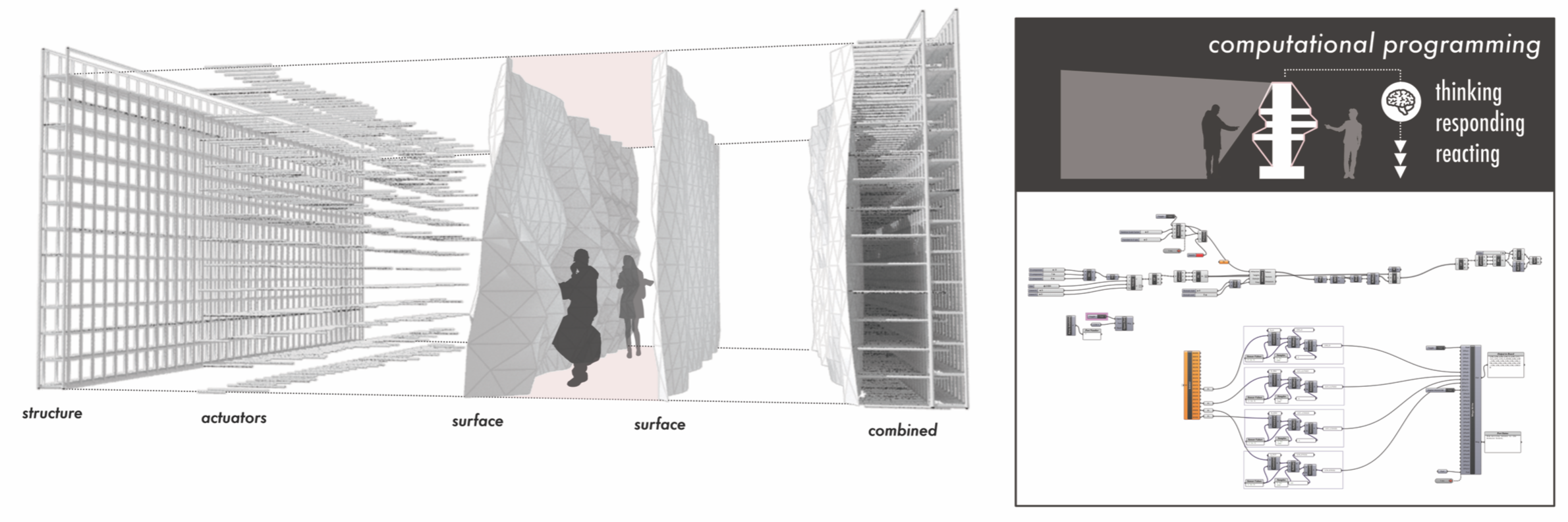

Project Perspective

The Reconfigurable Wall System explores adaptive technology and the interaction between people and space. Its essential building blocks are the Kinect depth sensor and the robotic wall panels, which are synchronized by control logic running in a Rhino-integrated setup.

Technical Implementation: Spatial Algorithmic Movement and Feedback

The project can be read as a stack of layers that turn occupant motion into spatial change:

- Identification layer: The Microsoft Kinect acts as the system's spatial eye, using its depth sensor to measure the occupant's movement within the 3D room.

- Conversion layer: The Arduino uses the Firmata protocol to receive spatial vectors from Rhino Grasshopper.

- Interface layer: Grasshopper FireFly acts as the data dashboard for wall status checks (Open/Closed/Transition).

- Actuation layer: A linear actuator array moves the wall panels to produce the dynamic labyrinth changes.

- Processing Logic layer: The system code follows a "labyrinth algorithm" (or spatial-loop) strategy: it interprets the occupant's position data and selects a matching wall configuration, aiming for a safe and rhythmic architectural response.
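The processing logic described above can be illustrated with a minimal sketch. The original project does not publish its algorithm, so everything here is an assumption made for illustration: the room size, the 2x2 grid of zones, the four panels, and the lookup table `CONFIGS` are all hypothetical. The sketch only shows the general idea of matching an occupant position to a wall configuration.

```python
from typing import Dict, Tuple

# Assumed values, not taken from the project.
ROOM_SIZE = 4.0  # side length of the square floor plan, in metres
GRID = 2         # number of zones along each side

# Hypothetical lookup table: zone -> target extension of four panels,
# where 0.0 means fully open and 1.0 means fully closed.
CONFIGS: Dict[Tuple[int, int], Tuple[float, ...]] = {
    (0, 0): (0.0, 1.0, 1.0, 0.0),
    (0, 1): (1.0, 0.0, 0.0, 1.0),
    (1, 0): (1.0, 1.0, 0.0, 0.0),
    (1, 1): (0.0, 0.0, 1.0, 1.0),
}


def zone_of(x: float, y: float) -> Tuple[int, int]:
    """Map a floor position in metres to a grid zone, clamped to the room."""
    def index(value: float) -> int:
        i = int(value / ROOM_SIZE * GRID)
        return min(max(i, 0), GRID - 1)

    return index(x), index(y)


def target_config(x: float, y: float) -> Tuple[float, ...]:
    """Return the wall configuration matched to the occupant's position."""
    return CONFIGS[zone_of(x, y)]
```

For example, `target_config(0.5, 3.5)` looks up zone `(0, 1)`. A lookup table keeps the behaviour easy to inspect; a real installation might instead compute configurations from the occupant's path or speed.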

Hardware-Architectural Infrastructure

- Arduino Uno: The "brain" of the project, receiving commands from Grasshopper and coordinating the actuator control signals.

- Microsoft Kinect: The "Sensing Link" of the installation, supplying depth data on the occupant's position.

- Robotic Wall Panels: The "Adaptive Fabric" of the installation, the movable surfaces that reshape the space.

- FireFly Plugin: Connects Grasshopper to the Arduino and provides the computational platform for the sensor logic.

- USB B Cable: Used to program the Arduino; it also serves as the primary interface between the computer and the system controller.

- 24V 10A Power Supply: Powers the linear actuators that move the wall panels.

Wall Automation and Interaction Step-by-Step

The reconfigurable wall is set up and run in the following sequence:

- Initialize Workspace: Mount the Kinect and Arduino Uno on the installation frame and connect the actuators.

- Setup Output Sync: In Rhino Grasshopper, initialize the FireFly components and define the Firmata port to coordinate the motion.

- Internal Dialogue Loop: The wall continuously performs depth checks and updates panel positions in real time based on occupant movement.

- Visual and Computational Feedback Integration: Watch the labyrinth dashboard and the Rhino preview, which act as a status signal and follow the wall's current spatial settings as the panels move.
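The update loop in the steps above can be sketched as follows. This is a simplified stand-in for the Grasshopper definition, not the project's actual logic: panel positions are assumed to be normalized extensions between 0.0 (open) and 1.0 (closed), and `MAX_STEP`, `step_panels`, and `panel_status` are hypothetical names. Each update moves every panel a bounded distance toward its target, and the status reported to the dashboard (Open/Closed/Transition) is derived from the position.

```python
from typing import List

MAX_STEP = 0.25   # assumed maximum change in extension per update
TOLERANCE = 1e-6  # assumed tolerance when comparing positions


def step_panels(current: List[float], target: List[float],
                max_step: float = MAX_STEP) -> List[float]:
    """Move each panel toward its target by at most max_step."""
    updated = []
    for position, goal in zip(current, target):
        difference = goal - position
        if abs(difference) <= max_step:
            updated.append(goal)  # close enough: land exactly on the target
        elif difference > 0:
            updated.append(position + max_step)
        else:
            updated.append(position - max_step)
    return updated


def panel_status(position: float) -> str:
    """Classify a normalized extension as Open, Closed, or Transition."""
    if position <= TOLERANCE:
        return "Open"
    if position >= 1.0 - TOLERANCE:
        return "Closed"
    return "Transition"


# Example: one open panel and one closed panel, both asked to close.
positions = step_panels([0.0, 1.0], [1.0, 1.0])
statuses = [panel_status(p) for p in positions]
```

Limiting the step per update keeps panel motion gradual even if the target configuration changes abruptly, which is one plausible way to approach the "safe and rhythmic" response the project describes.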

[!IMPORTANT] The linear actuators draw high power and require a stable 24V supply; the Arduino's 5V pin cannot drive the mechanical walls!
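A quick power-budget check makes the warning above concrete. The supply rating (24V, 10A) comes from the parts list; the per-actuator current and the 80% headroom factor are assumptions, since the project does not state them. Check the actuator datasheet before relying on any of these numbers.

```python
SUPPLY_VOLTAGE = 24.0  # volts, from the parts list
SUPPLY_CURRENT = 10.0  # amps, from the parts list

# Total power the supply can deliver: 24 V * 10 A = 240 W.
supply_power_w = SUPPLY_VOLTAGE * SUPPLY_CURRENT


def max_simultaneous_actuators(current_per_actuator_a: float,
                               headroom: float = 0.8) -> int:
    """Estimate how many actuators can move at once.

    current_per_actuator_a is an assumed peak draw per actuator in amps.
    headroom derates the supply so it is not run at its full rating.
    """
    if current_per_actuator_a <= 0:
        raise ValueError("current_per_actuator_a must be positive")
    usable_current = SUPPLY_CURRENT * headroom
    return int(usable_current // current_per_actuator_a)
```

With an assumed draw of 2 A per actuator, `max_simultaneous_actuators(2.0)` gives 4. If the array has more actuators than that, the control logic would need to stagger their movement or the supply would need to be larger.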

Future Expansion

- OLED Identity Dashboard Integration: Add a small OLED display to show "Wall Status" or "Battery (%)".

- Multi-sensor Sync: Connect "Ultrasonic Sensors" to add higher-precision "Local Proximity" responses, with readings shared wirelessly via the cloud.

- Cloud Interface Support: Add a web dashboard, accessible from a smartphone over WiFi/BT, to track and log total motion history.

- Advanced Velocity Profile Customization: Add "Machine Learning (vCore)" to the code so the labyrinth can learn occupant patterns automatically and personalize the spatial experience.

The Reconfigurable Wall System is a good project for anyone looking for a more interactive and engaging architectural tool.